cognitive convergence

what happens when ai shapes human thought at scale?

I remember the first time someone in California said, “Oh, you’re from a flyover state? I’m sorry.” I chuckled. I wasn’t offended; many on the coasts just hadn’t spent time in places like Wisconsin, where I grew up.

What stuck with me was the reflexive assumption that a flyover state wasn’t worth visiting, or perhaps even understanding.

Lately, I’ve questioned whether we’re encoding this reflex into AI systems—not overt bias, but a quiet pull toward the norm. Based on its training data, a model infers a normal way to judge, reason, and interact. That same reflexive assumption about what’s worth understanding can be learned and reinforced.

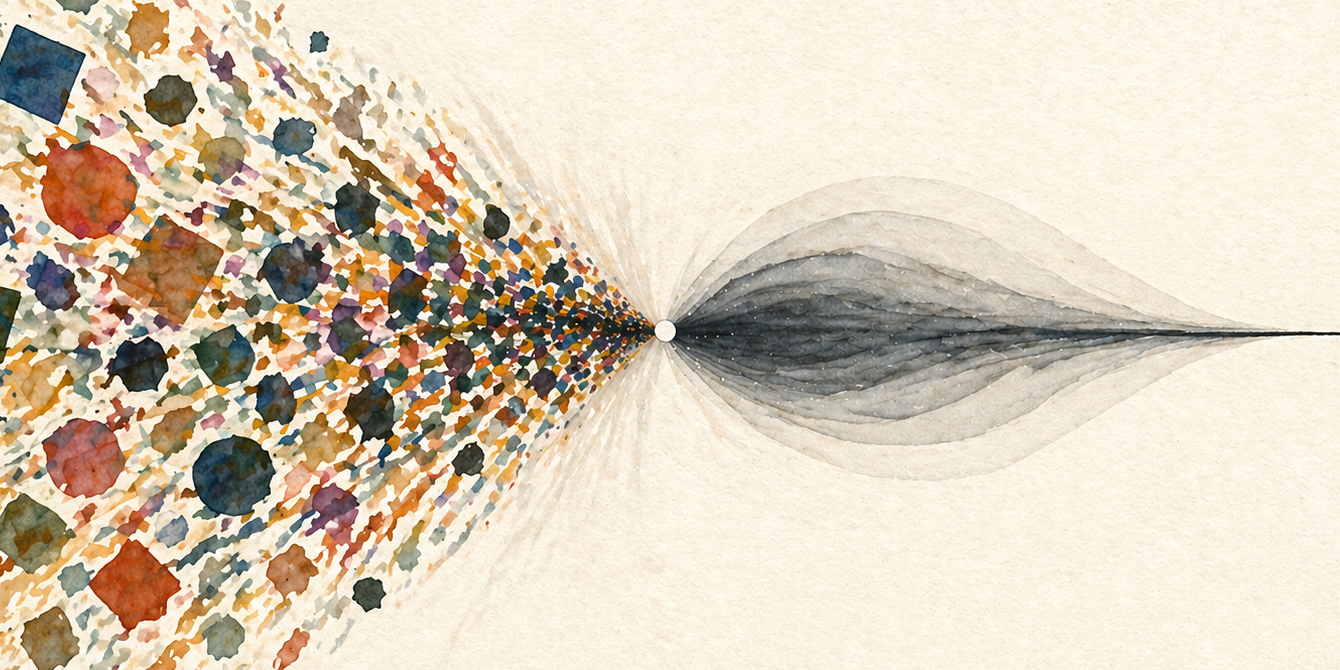

It’s a form of compression: reducing a wide range of thought into a smaller set of patterns, and assuming what’s lost doesn’t matter. AI systems risk operationalizing that assumption at scale.

What effect will this have on creativity and diversity of perspective? What happens as we outsource more of human judgment to a large, opaque system?

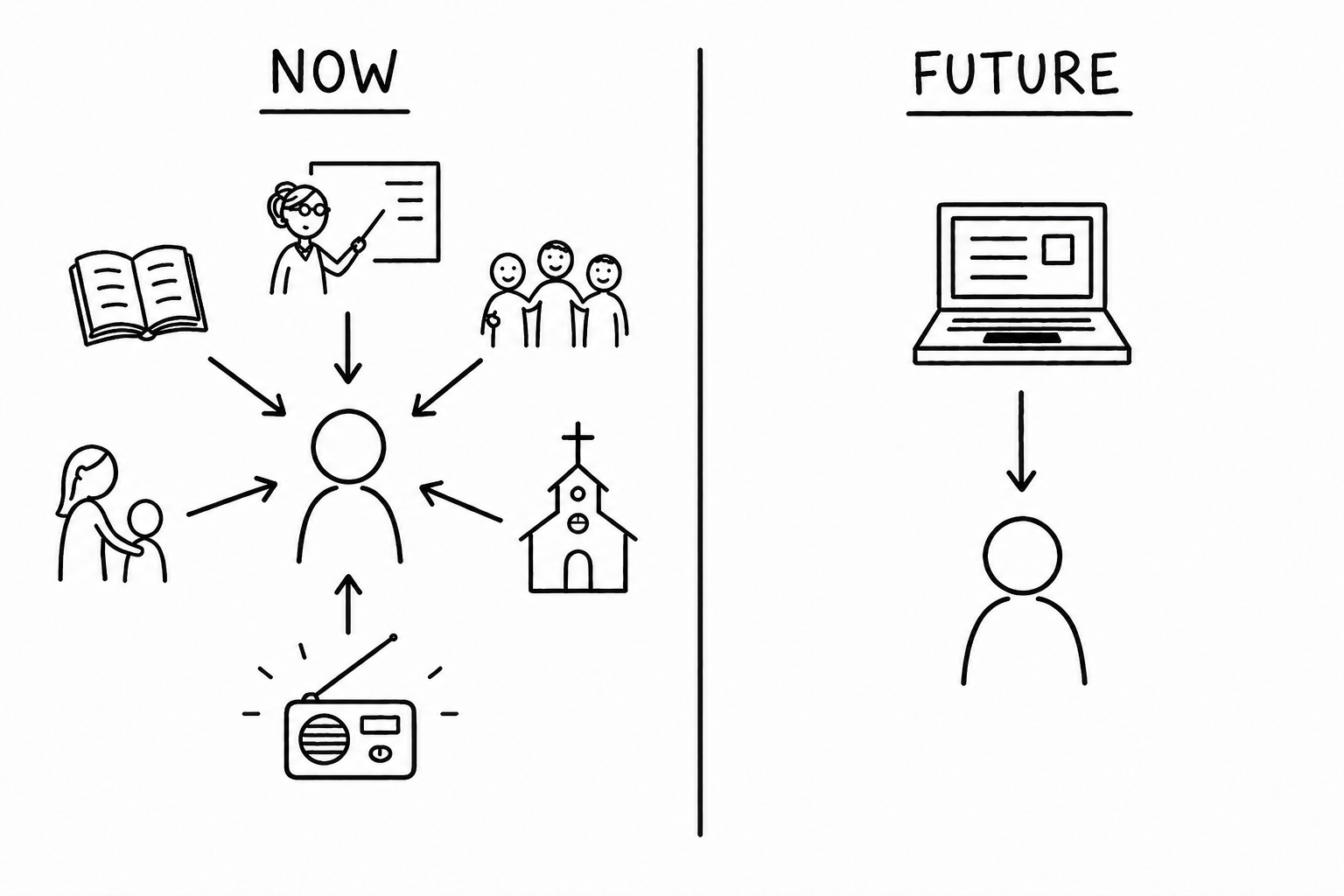

Until recently, platforms saw only slices of a person. LinkedIn captured professional identity. Facebook, social identity. Amazon, consumer habits. Now a single interface (e.g., ChatGPT) is gaining intimate access to all slices—professional projects, family health concerns, and private anxieties.

As a chatbot, AI synthesizes information and offers suggestions. Unlike search or social media, which surface multiple sources for users to interpret, these systems collapse them into a single response. This shifts the act of synthesis from the user to the model, and with it, part of the judgment. As systems become more agentic, that role expands further: AI will make decisions and take action; mediate relationships; and increasingly sit between individuals and the institutions that govern their lives.

There are early signs that this shift compresses variation. When writers use generative AI, their collective work becomes less diverse (source). When models train on their own outputs, minority patterns recede (source).

So at first, essays become smoother. Arguments follow similar patterns. Over time—as people rely on the same system to frame problems and generate responses—human thinking may converge, too.

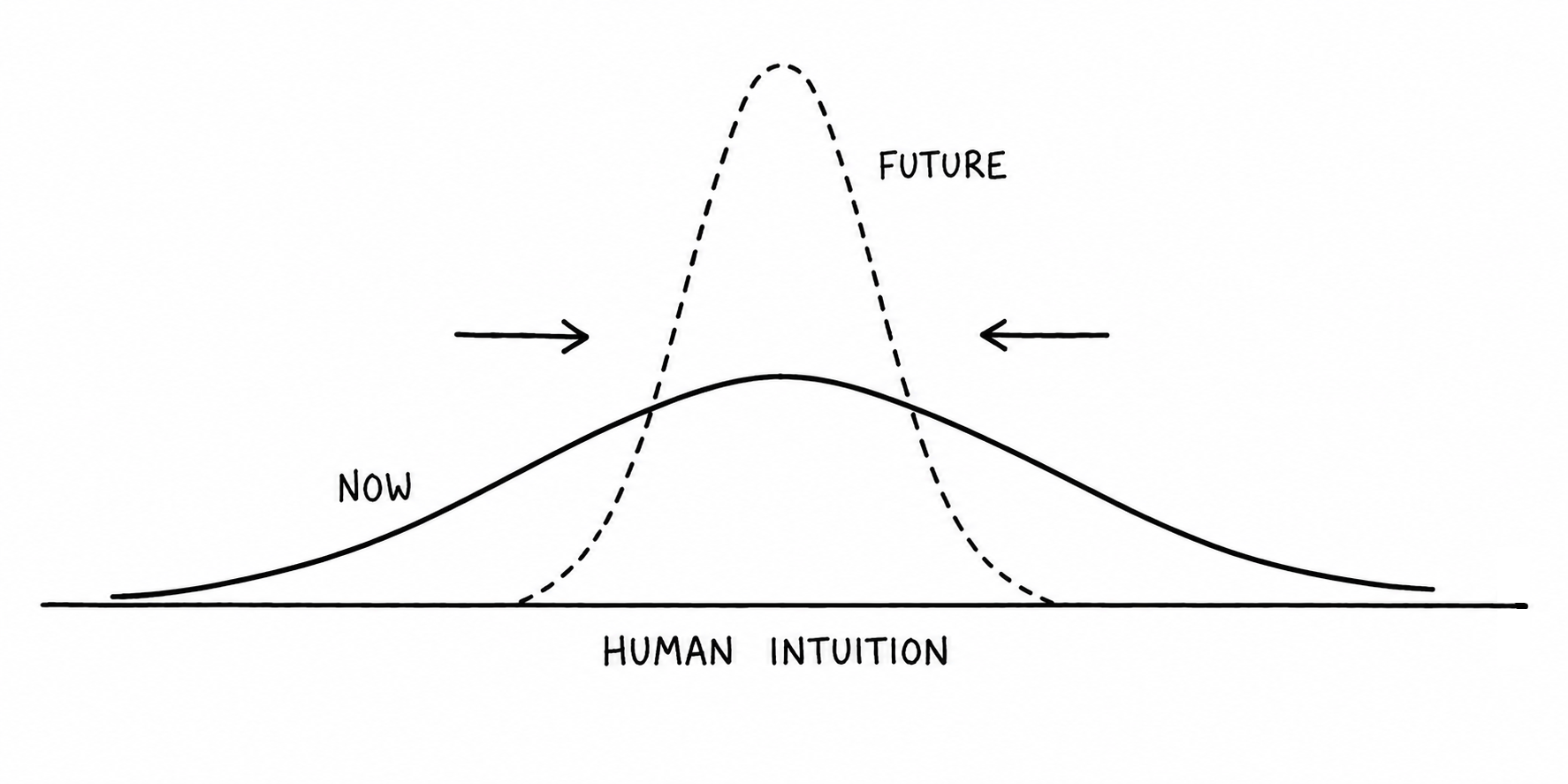

If a large group of people develop their reasoning inside the same system, the risk isn’t just homogenized outputs; it’s homogenized intuition.

In machine learning, a similar narrowing is called mode collapse: a generative model settles on a few kinds of output and stops producing the rest. By analogy, I call the human version cognitive convergence.
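A toy simulation can make the narrowing concrete. This is a minimal sketch, not a model of any real training pipeline: it assumes a "model" that is nothing more than a table of category frequencies, and "retraining" that re-estimates those frequencies from a finite sample of the model's own output. The function name `resample_generations` and every number below are illustrative assumptions.

```python
import random
from collections import Counter


def resample_generations(weights, sample_size, generations, seed=0):
    """Repeatedly re-estimate a categorical distribution from its own samples.

    Returns the number of categories with nonzero probability after each
    generation (index 0 is the starting distribution).
    """
    rng = random.Random(seed)
    categories = list(weights)
    probs = [weights[c] for c in categories]
    support_sizes = [sum(1 for p in probs if p > 0)]
    for _ in range(generations):
        # "Generate" a finite corpus from the current model...
        sample = rng.choices(categories, weights=probs, k=sample_size)
        counts = Counter(sample)
        # ...then "retrain" by fitting the next model to that corpus alone.
        probs = [counts[c] / sample_size for c in categories]
        support_sizes.append(sum(1 for p in probs if p > 0))
    return support_sizes


# One dominant pattern plus twenty rare ones (illustrative numbers only).
weights = {"common": 0.80, **{f"rare_{i}": 0.01 for i in range(20)}}
sizes = resample_generations(weights, sample_size=100, generations=30)
print(sizes)  # surviving categories per generation
```

A category that draws zero samples gets probability zero in the next generation and can never return, so the count of surviving categories can only hold steady or fall. Rare categories are the ones most likely to miss a finite sample, so in this sketch they tend to disappear first.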

The cost of that convergence is not evenly distributed. Systems perform best on what they see most, so underrepresented people and situations are more likely to be misinterpreted or oversimplified.

And the center they converge toward is not neutral. It reflects dominant patterns in the data and the constraints placed on the system. The “average” answer is not what humanity thinks; it is what the system, shaped by complex incentives, can reliably produce within those bounds.

This is where alignment shows up: not as systems pursuing alien goals, but as systems that systematically favor certain framings over others. Such a system doesn’t tell us what to think. It narrows the range of ways we think.

Earlier debates about fairness in AI focused on discrete decisions. For example: given an applicant’s financial context, should a loan be approved? The outcome was binary and concrete.

The problem is now less visible and harder to measure. In a common workflow such as applying for a loan, AI drafts the application, shapes how the applicant tells their story, summarizes that narrative for review, and assists the lender in evaluating risk. At each step, context is standardized.

The risk is a loss of resolution—the inability to represent context that falls outside the norm. As systems become more compressed, institutions inherit those limitations through the tools they rely on for decision-making.

So what can we do about it?

I don’t yet have a solution—nor even a full understanding of the problem. Indeed, I could argue this isn’t a problem at all (see counterpoint). But a few directions are worth exploring.

At the product level, there’s value beyond single-answer outputs. Systems can communicate assumptions and present diverging perspectives. At a time when our social feeds and public discourse have skewed toward polarized or simplified narratives, this matters. It could help us understand—rather than compress—human differences.

At the individual level, we must retain the ability to form judgments independently. We shouldn’t default to the system for every question. Forming intuition requires experience, dialogue, and struggle.

There is also upside. In principle, these systems could expand our exposure to different ways of thinking—across cultures, disciplines, and lived experiences. But that is not the default; left to scale, efficiency, and commercial incentives, they converge toward what is most common and easiest to serve. Expansion requires deliberate design.

The goal is not to keep AI out of human life. It is to prevent human judgment from being compressed into one large, shared system.

The next time someone jokes about a flyover state, I’ll still chuckle. But what I’d prefer is curiosity—not because Wisconsin needs defending, but because curiosity resists compression.

Curiosity is the instinct to assume meaning exists outside the default—and to seek that out, before it disappears.

Counterpoint

One could argue this process runs in reverse. Rather than compressing the range of human thought, AI absorbs the repetitive, standardized parts of reasoning and frees people to focus on what is novel, ambiguous, and creative. In this view, the system expands intuition. By removing the tedium of synthesis and recall, AI creates more space for exploration, intuition, and new ideas.

Both interpretations may be true in different contexts, but the stakes are high enough that we should thoughtfully consider how these systems are designed and used—monitoring whether they expand or compress how we think.

Published April 2026